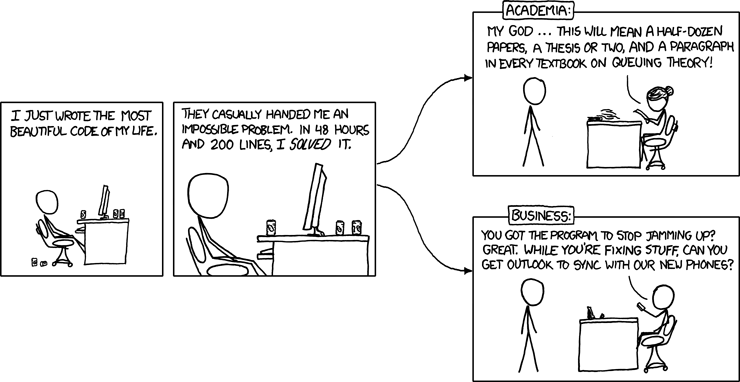

Whenever I hear politicians demanding that our education system has to change to better meet the needs of industry, I am reminded of this xkcd comic:

While this might be facetious, I am neither being naive nor pontificating from an “ivory tower”: I think there is an important point to be made about reconciling the traditional aims of education and the modern needs of industry. I understand that there is an imperative to equip our graduates (or school leavers) to be useful members of the nation’s workforce. However, higher education should not be conflated with training; the onus should be on industry to train its workforce, especially if it requires specific skillsets. Clearly we have to be aware of the requirements of industry in a more general sense, but I would much prefer to develop a graduate who is capable of applying their existing knowledge and learning new skills (e.g. Alvin Toffler: “The illiterate of the 21st century will not be those who cannot read or write, but those who cannot learn, unlearn and relearn.”), rather than one who only has specific (and perhaps increasingly transient) skills and understanding. Furthermore, trying to meet the immediate needs of industry can be problematic without taking into account the latency of the graduate “pipeline”; this is especially relevant to the ongoing debate regarding computer science education and fulfilling the needs of the IT industry.

But overall, I would like to ensure that as a nation we continue to promote education as being important in its own right — for enjoyment and self-betterment (as well as lasting for longer than the time spent in formal education) — rather than primarily as a means to determining a career path.

I think there are two convincing arguments for education as most universities currently practice it. One is self-betterment, but I think the other argument is long-term vs short-term. If all universities did was train programmers in the current popular language, we’d have spent the last fifteen years churning out Java developers. But now Java is getting lambdas and closures. So it’s a good job we taught them some functional programming, eh! And what about another ten years, if the paradigm shifts again? I’m reminded of a pithy quote about fashion:

“The more ‘now’ you are, the more ‘then’ you’ll be.”

Universities should not be aiming to meet the exact needs of industry right now. If anything, universities should be aiming (as best they can) to meet the needs of industry in twenty years’ time. This constant demand by some companies to supply workers who know exactly what they want is a) an attempt to push the costs of training out of their business/industry and on to the government/individuals, and b) indicative of a short-termism that has become all too prevalent in industry.

How about this:

I believe there is a quote by somebody very wise re “… Learners inheriting the Earth whilst the Learned find themselves beautifully equipped for a world that no longer exists …” or similar. What we (industry) need is to have well-rounded, questioning people who have a desire to continue to develop and learn throughout their careers. You are SO right about the short currency of any specific technical knowledge – and in any case the tech stuff is relatively straightforward to impart once grads are with an organisation. What I would want from schools and universities is to teach their students how to learn and encourage the innate desire to continue to do that throughout their lives. ‘Soft’ (i.e. the hardest to acquire) skills should be the focus of our education system.

Interesting. I found it took me time to bed in as an industrialist on the BCS Accreditation Panel. I would argue that I am an industrialist who gets academia more than most, but I am still very much learning the needs of Comp Sci teaching. So I was pleased to see that most of the comp sci departments I have been involved with accrediting have known when to tell their tame industrialists where to get off, and to ignore them where appropriate.

The BCS AAC does need more engaged industrialists as members from a diversity of industry sectors.

My experience is that U.K. comp sci degree schemes do a really good job of developing those who are motivated and capable. The challenge is for the less able and motivated. So Mr Willetts is talking out of his ****.

When I began my first job (as a web developer), I learnt more in the first week about what I needed to do in my job than I had learnt at uni. This was not a bad thing – uni had taught me the skills I needed to be able to teach myself these things and to find out the answers without having to constantly pester my boss.

However, the lack of “learning for enjoyment and self-betterment” starts off in schools where the only thing that matters is getting a good grade in the exams. I find this in ICT all the time: because we don’t offer a GCSE, a lot of students feel that doing it in Year 9 is a waste of time. After all, there’s no exam to work towards, so why bother learning this stuff? Incredible. I don’t think you’ll change this mindset at university level until you change it at school level.

There is also a problem with the perception of people who work in IT (which oddly I was contemplating writing a blog about this morning) as the XKCD cartoon illustrates. It’s perfectly fine for people to slag off an elaborate piece of web programming on a website and dismiss it as useless if there is one error or their connection is running slowly, yet they would never dream of talking about a piece of art work, play etc. in such a way. I find it incredibly rude.

Tom, I think that the real problem is that the kind of education you’re espousing is not compatible with the questionable aim stated by the previous government of having 50% of school leavers progressing to study for a degree at a university.

It’s clearly not sustainable (or useful) to have such a large proportion of the potential future workforce studying for the sake of academic interest or for learning’s sake – someone has to pay for that – and we all know students aren’t very pleased about that prospect, right? In the end, the majority of people are really only interested in getting a degree so they can get a better job, not to become academics or out of pure curiosity (though I wholeheartedly agree there is definitely a place for that).

Personally I’d like to see universities aiming less at pleasing the masses and more at producing top-class academics (and in turn groundbreaking research), and see more apprenticeships in industry supported by the government as a means of training young people in the kinds of skills that companies really need to survive in the labour marketplace.

I’ve got a different perspective: I no longer care what universities choose to teach. I think there are many better alternatives available now, and it’s about time we started to look seriously at them.

A key point is that education is not the same as learning, and there’s too much of the former and not enough of the latter. I like to explain the difference as learning is something you do for yourself, whereas education tends to be done to you. We all need to do more for ourselves.

Is there anything that you could expect to get from a University education (but not necessarily get…), that you can’t get elsewhere? The only thing I can think of now is cheap beer, and a piece of paper of varying worth.

Here’s one alternative: a collection of individuals in some city etc offer to teach various topics from their knowledge and experience through small group sessions, for a nominal fee – not megabucks for rock-star speakers, just enough to compensate fairly for their time. Interested people sign up for these. What gets offered depends on available resources and demand from people in the area. Local networks and tech groups can help with advertising, cheap venues, ideas for topics. We cut through the expensive bureaucracy you see in institutions, and foster a more direct connection between those who want to learn and those who are willing to teach.

Does this give too much power to “market forces”, like towards what is currently trendy? Perhaps, though I believe the relatively unstructured approach will help people be more open-minded and discriminating about what they want to learn, hence fostering more debate and participation. (The situation in universities is not quite so flexible, e.g. it can take a few years to change topics, and they are subject to outside forces too.)

A few people (including me) are trying to make this happen in the North East and Scotland. It’s not there yet, though there are some promising green shoots as people start to take on the ideas. Two interesting references are Morna Simpson’s FlockEdu (http://www.flockedu.co.uk), and Richard Powell’s write-up on running a workshop (http://www.kernelmag.com/scene/1495/giving-back-the-workshop-way/).

Looking wider, we can look to open source communities to share information, develop useful software skills, get experience of teamwork and collaboration, and build reputations. I’m involved in a few communities, and have learnt much of value this way.

For the record, I spent 10 years lecturing on CS at a northern university. Leaving was the best thing I ever did.

The distinction you draw between education and learning is interesting — I certainly agree that we should promote and encourage doing more for ourselves i.e. self-betterment.

However, I don’t think the traditional university model is outmoded or ineffective; I just think it will adapt to absorb new innovative models and modes of delivery for education (see, for example, edX).

An excellent response, Paul. I agree. I think it sad that some influencers are using the mechanisms you describe very effectively, but for quite negative purposes – such as radicalisation or conditioning for acceptance of financial debt.

I agree that industry should be training to the specific skillsets they need but industry are not great at providing the individual with the best all round training. I’m no expert on HE but would agree that the purpose is not to meet the direct needs of industry.

Speaking from experience, I joined IBM in 1996 as a graduate trainee “IT Specialist” within the Global Services consulting group. As a Geography graduate I was quite surprised to find myself on a COBOL training course 4 weeks later but enjoyed it and just got on with it. I wasted a year of my life on the Millennium Bug, running UNIX code through scanners and not really understanding what I was doing. They gave us a taster of Java on a course towards the end of the two-year graduate programme but the only job opportunities were on COBOL / SQL projects.

The Computer Science graduates got a lot more choice over the jobs they got to work on and the training courses they were sent on than those of us who were not specialists.

Apart from playing with BigTrak in junior school I had no IT / ICT / Computer Science education at school. I didn’t learn Binary in GCSE Maths as those who did O-level 2 years earlier did.

I honestly think that if I’d had some exposure to Computer Science concepts at school, and some programming (without getting into the relative benefits of the two here), I would have had a much more successful IT career. I would not have been fobbed off quite so easily at the beginning with the claim that COBOL was a really useful thing to learn. I actually ended up spending 6 years as a fairly good systems analyst but knew that my ability to move into projects outside of my narrow experience of COBOL / SQL / DB2 was limited, so I left to become a teacher.

IBM got their money’s worth out of me. Trained me (and many others) in what they needed us to know for immediate commercial purposes which is what you’d expect. A background in CS would have given me the knowledge to take that a whole lot further.

This is a pet rant/conversation in our home. I have been fortunate enough to have taught in both Further and Higher education sectors but feel the line between the two is becoming increasingly blurred. Of course, practical and vocational skills are important but they should not be exclusively taught at the expense of receiving a wider academic education. University should absolutely be about promoting employability and students leaving with industry-relevant skills and knowledge, but it should primarily be about fostering a sense of enquiry and equipping students with the ability to react to a rapidly changing world (this is sort of related to something I am considering for a PhD project – improving general scientific literacy amongst students – regardless of their subject of choice!).

I graduated from university in 1997 with a degree in Geography with Computer Science and, like ljbunce, I ended up working in a number of different programming/computing roles. The practical knowledge I took with me from university helped me get a foot on the ladder but, particularly in that sector, things moved extremely quickly and there was a constant need to update skills on the job or via industrial training.

As others have already mentioned, before going into my degree, any experience of computer science I had was limited to evenings in front of my Sinclair Spectrum at the age of 7 or 8. I asked my Dad for games and he bought me some books on Basic and told me to make them myself. I’m too old to have benefitted from any significant computer science experience in school. Over the past few years, I have been lucky enough to have some experience of working with schools and Brownies, e.g. programming ‘Mars Rovers’ using Lego Mindstorms robots (frustratingly, this has often been with ‘gifted and able’ students when it would be nice to be working more with other students). Fortunately, it’s a very different beast these days, although I appreciate there is some way to go.

My husband is still in computing, albeit academia. He studied for his undergraduate degree and MSc in Greek universities. In Greek academia, in addition to the relevant computing modules, students are also expected to take additional modules in ancient Greek, modern languages, philosophy and academic skills. I think this is a great approach and it has certainly shaped the professional he is today, but it is one I fear would be increasingly difficult to implement within the context of UK academia and its current pressures. I would dearly love to see it, though.

Thank you for all of the interesting comments; there seems to be a fairly consistent theme!

FYI, this blog post formed the basis for this week’s THE Scholarly Web.

I would agree, if the aim of the majority of people studying CS was to learn CS, but the data suggests that at least half study CS because they want to be software developers. What’s missing is a viable vocational route, which would naturally refine the CS audience to those who are genuinely interested in CS. Everybody happy 🙂

And I’m getting a little tired of this “vocational = learning the latest popular programming languages and tools”. Much of what we know about software development has been known since the 1960s. And, yes, there’s a lot of theory in software development, too. I could fill several degree courses with the things I’ve had to learn during a 20-year career, and the specific technologies are only a small part of that.