In this new monthly blog post series — “A Month In Data” — I have curated another set of interesting articles, links and resources that I have come across this month relating to data, algorithms and policy: from data science, AI and machine learning, through to ethics, society and governance. As before, alongside the main list — which is presented in no specific order or precedence — I also offer a set of short links to posts, academic papers and other relevant resources.

Part III: October 2017

In this third set of posts, we have everything from machine learning, neural nets and the risks of AI, through to gerrymandering, the challenges of creating fantasy geographies, and computer vision in porn:

Report: Growing the artificial intelligence industry in the UK

This independent review for the UK Government, carried out by Professor Dame Wendy Hall and Jérôme Pesenti, reports on how the AI industry can be grown in the UK. The vision is for the UK to become the best place in the world for businesses developing and deploying AI to start, grow and thrive, and to realise all the benefits the technology offers.

Your Data is Being Manipulated

This article presents remarks by Data & Society founder and president danah boyd from her keynote at the 2017 Strata Data Conference in New York City. In short, we need to reconsider what security looks like in a data-driven world, from gaming the system and vulnerable training sets through to building technical antibodies. If you are building data-driven systems, you need to start thinking about how that data can be corrupted, by whom, and for what purpose.

The Real Risks of Artificial Intelligence

Remarkably, those who use the term “artificial intelligence” have not really defined it. Even now, some AI experts say that defining AI is a difficult (and important) question, and one that they are still working on. “AI” remains a buzzword: a word that many think they understand but nobody can define. Recently, there has been growing alarm about the potential dangers of artificial intelligence; famous giants of the commercial and scientific world have expressed concern that AI will eventually make people superfluous. The more immediate risk, however, is that the application of AI methods can lead to devices and systems that are untrustworthy and sometimes dangerous.

How Computers Turned Gerrymandering Into a Science

Gerrymandering used to be an art, but advanced computation has made it a science. Wisconsin’s Republican legislators, after their victory in the census year of 2010, tried out map after map, tweak after tweak. They ran each potential map through computer algorithms that tested its performance in a wide range of political climates. The map they adopted is precisely engineered to assure Republican control in all but the most extreme circumstances.

Data is not the new oil

In contrast to recent editorials in The Economist, Amol Rajan argues that unlike oil, which is finite, data is a super-abundant resource in a post-industrial economy. There are such important differences between data today and oil a century ago that the comparison, while catchy, risks spreading a misunderstanding of how these new technology super-firms operate and of what to do about their power.

China to build giant facial recognition database to identify any citizen within seconds

China is building the world’s most powerful facial recognition system with the power to identify any one of its 1.3 billion citizens within three seconds. The goal is for the system, which will be connected to surveillance camera networks, to be able to match someone’s face to their ID photo with c.90% accuracy. Alongside the technical limits of facial recognition technology and the challenges of the large population base, this raises significant questions about privacy and personal data.

PornHub uses computer vision to ID actors, acts in its videos

They say porn adopts new technologies first. PornHub, a site which receives 80 million visitors a day, found that its old method of tagging videos by hand was no longer sufficient. Its computer vision system can identify specific actors in scenes as well as various sex acts, positions and “attributes”. The model has been run on c.500,000 featured videos, including user-submitted ones, and the company plans to scan the whole library at the beginning of 2018.

London’s Hidden Tunnels Revealed In Amazing Cutaways

The layout of London can only be fully understood if we examine it in three dimensions; this article takes a look at some of the capital’s greatest cutaway diagrams.

Tolkien’s Map and the Perplexing River Systems of Middle-earth

This article articulates the challenges of creating fantasy geographies: just as Tolkien’s novels have had a massive influence on epic fantasy as a genre, his map is the bad fantasy map that launched a thousand bad fantasy maps, many of which lack even his mythological fig leaf to explain the really eyebrow-raising geography. Alongside the incomprehensible courses of the rivers, also check out the messed-up mountains of Middle-earth.

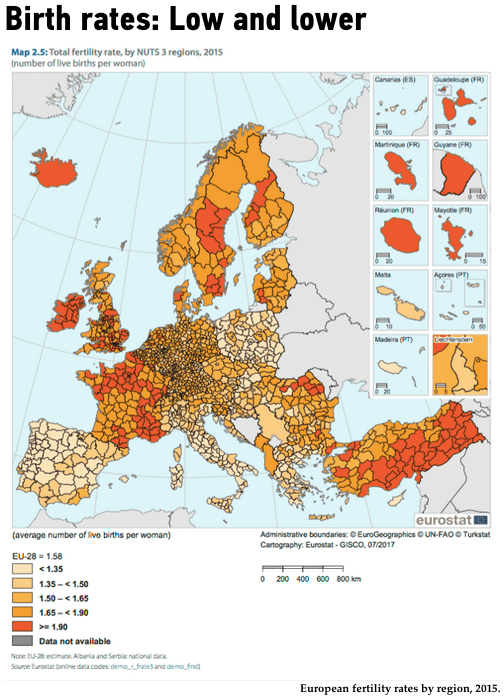

Mapping Where Europe’s Population Is Moving, Aging, and Finding Work

Europe’s population is on the move, and a new report by Eurostat (the statistical office of the EU) suggests exactly where and why: younger people are fleeing rural areas, migrating northward, and having fewer children — look at how that’s changing the region.

MIT researchers trained AI to write horror stories based on 140,000 Reddit posts

Shelley is an AI program that generates the beginnings of horror stories, trained on original horror fiction posted to Reddit. Designed by researchers from MIT Media Lab, Shelley launched on Twitter in October; the team behind Shelley is hoping to learn more about how machines can evoke emotional responses in humans. (You can also enjoy these masterpieces generated by neural networks: this spellbinding new Harry Potter chapter, ghostwritten by a network trained on all seven books, and this “exact average” script for the comedy show Scrubs.)

Fooling Neural Networks in the Physical World with 3D Adversarial Objects

Neural-network-based classifiers reach near-human performance in many tasks, and they’re used in high-risk, real-world systems. Yet these same neural networks are particularly vulnerable to adversarial examples: carefully perturbed inputs that cause targeted misclassification. This group (see papers) has developed an approach to generate 3D adversarial objects that reliably fool neural networks in the real world, no matter how the objects are looked at.
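For readers curious what a “carefully perturbed input” looks like in practice, here is a minimal sketch of the general idea. It is not the approach from the linked work, which deals with 3D objects and real neural networks; instead it applies a single gradient-sign step to a tiny logistic-regression classifier. The weights, bias, input and `epsilon` value are all hypothetical, chosen purely for illustration.

```python
import math

def predict(weights, bias, x):
    """Probability of class 1 under a logistic-regression classifier."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def sign(v):
    """Return -1, 0 or 1 depending on the sign of v."""
    return (v > 0) - (v < 0)

def adversarial_example(weights, x, epsilon, target):
    """Nudge every feature by at most epsilon toward the target class.

    For a linear model the gradient of the score with respect to the
    input is just the weight vector, so the sign of each weight gives
    the direction that most increases the class-1 score.
    """
    direction = 1 if target == 1 else -1
    return [xi + direction * epsilon * sign(w) for w, xi in zip(weights, x)]

# Hypothetical toy model and input (assumptions, not from any real system).
weights = [2.0, -1.0, 0.5]
bias = -0.5
x = [0.2, 0.6, 0.1]

x_adv = adversarial_example(weights, x, epsilon=0.3, target=1)
p_clean = predict(weights, bias, x)      # below 0.5: classified as class 0
p_adv = predict(weights, bias, x_adv)    # above 0.5: classified as class 1
print(f"clean: {p_clean:.3f}, adversarial: {p_adv:.3f}")
```

No feature moves by more than `epsilon`, yet the predicted class flips. Real attacks on image classifiers follow the same logic, with far more input dimensions and much smaller per-pixel changes.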

You might also like…

- Open data from the Large Hadron Collider sparks new discovery

- New media, familiar dynamics: academic hierarchies influence academics’ following behaviour on Twitter

- Save the Data: Future-proofing data journalism

- America’s unique gun violence problem, explained in 17 maps and charts

- Cyclist’s Cardiff route makes face on Strava app map

(also check out Part I and Part II in the A Month In Data series)
